Data-Center Contingency Planning

As information technology has grown, data centers have become a crucial, even mission-critical, part of plant operations. As such, they have become much larger, with considerable electrical loads that produce more parasitic heat and the need for more cooling. As a result, energy usage and efficiency have grown to be significant concerns.

Nearly every month, it seems, a new technical article or white paper is issued enlightening us about design changes for infrastructure devices in server systems. These publications are thorough, logical, and very technical, covering topics such as energy-use reduction, rack design and density, in-rack cooling, in-room ventilation improvements, power-usage effectiveness, types of air-conditioning systems to consider, economizer design, and the division of local operational responsibility between management-information-systems (MIS) and site facilities personnel. As these systems have grown in size and become a critical part of site operational integrity, awareness has followed. There is a much higher level of concern about data-center efficiency and reliability on the part of corporate executives.

Field Applications

We conduct energy- and utility-system assessments for commercial and industrial facilities. Part of that scope generally includes critiquing MIS operations and performing pragmatic operational audits of HVAC systems and stand-alone units providing cooling to server rooms so outages do not occur.

From an electrical-reliability and energy-efficiency perspective, most data centers have nearly all of the bases covered: uninterruptible-power-supply equipment with power-quality monitoring, dual power feeds, battery power for short blips, emergency diesel generators, redesigned in-rack ventilation, flow-through room air movement, and electronic-conditions monitoring for temperature, humidity, and voltage. The one crucial component of total systems we often see missing is redundant reliability of HVAC units that produce cooling for server rooms.

Generally, we find an in-room temperature indicator (thermometer) and/or electronic temperature monitor. Occasionally, there even is a humidity sensor with high and low limits identified per ASHRAE guidelines. Quite often, these sensors will send an alarm condition via the Internet to the corporate MIS department in New York, Atlanta, or Los Angeles. Should an alarm condition occur, the local plant HVAC technician eventually will receive a work-order call from the local data-center-team leader, who received a notice on his laptop from MIS headquarters in Chicago. Wow, this modern electronic-monitoring highway certainly is effective.
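As a rough illustration of the high- and low-limit checks such monitors perform, here is a minimal Python sketch. The limit values and the names `Limits`, `check_reading`, and `room_alarms` are placeholders assumed for illustration; they are not ASHRAE figures and should be replaced with the limits your site has adopted.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Limits:
    low: float
    high: float


# Placeholder limits for illustration only (assumed, not ASHRAE values).
TEMP_LIMITS_F = Limits(low=65.0, high=80.0)
RH_LIMITS_PCT = Limits(low=20.0, high=80.0)


def check_reading(name: str, value: float, limits: Limits) -> Optional[str]:
    """Return an alarm message if value is outside limits, else None."""
    if value > limits.high:
        return f"HIGH {name}: {value} (limit {limits.high})"
    if value < limits.low:
        return f"LOW {name}: {value} (limit {limits.low})"
    return None


def room_alarms(temp_f: float, rh_pct: float) -> list[str]:
    """Collect every active alarm for one server-room reading."""
    checks = [
        check_reading("temperature", temp_f, TEMP_LIMITS_F),
        check_reading("humidity", rh_pct, RH_LIMITS_PCT),
    ]
    return [msg for msg in checks if msg is not None]
```

A reading inside both ranges returns an empty list; any message in the list is an alarm condition that someone on site needs to see promptly.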

Recently, during an energy audit at a large pharmaceutical facility, I learned the 8-year-old, 12-ton packaged direct-expansion unit located on an adjacent rooftop had crashed on a Friday afternoon in July. The compressor motor failed because of a fouled coil and high head pressure. Crisis? You bet: there was no backup air-conditioning unit (ACU)! I asked how the incident was handled.

“We knocked down a 16-ft section of the wall enclosing the server room, opened all of the doors to the adjacent offices, and used six large man-cooler fans from the manufacturing bays to circulate cool air from the aisleway and those nearby office spaces,” I was told.

It took the local HVAC contractor eight hours to respond, replace the defective compressor, purge the system, add new refrigerant, clean the coils, make adjustments, and get the air-handling unit (AHU) back in operation. That certainly exceeded the two-hour limit for the server room to be without cooling. Server operation and plant production were curtailed somewhat during the unplanned outage.

Surprisingly, four months later, at the time of my visit, the owner had yet to develop a written contingency plan in case the 8-year-old stand-alone unit failed again.

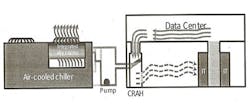

In earlier years, as server rooms grew in size, a standard air-conditioning design consisted of two or even three very reliable stand-alone units strategically located inside of a room. If one unit needed servicing, the other unit(s) (at full load) could alleviate a crisis. There may even have been a service contract with the manufacturer in the event of an emergency. These days, many computer-room air conditioners (CRACs) are of a split-system design, taking up less space at reduced noise levels. Out of sight sometimes can be out of mind.
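The multi-unit reasoning above can be checked with simple arithmetic: with the largest unit out of service, do the remaining units still cover the room load? The sketch below is one hedged way to express that check. The function name `survives_single_failure` and the 10-ton room load in the usage note are assumptions for illustration, and real sizing should rest on an engineered load calculation.

```python
def survives_single_failure(unit_tons: list[float], load_tons: float) -> bool:
    """True if the room load is still covered with the largest unit out of service."""
    if not unit_tons:
        return False
    # Worst case: the largest unit is the one that fails.
    remaining_tons = sum(unit_tons) - max(unit_tons)
    return remaining_tons >= load_tons
```

For a hypothetical 10-ton room load, a single 12-ton unit fails the check (`survives_single_failure([12.0], 10.0)` is `False`), while two 12-ton units pass it.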

Lessons to Be Learned

If your facility is older and/or does not have 100 percent standby ACU capacity for the server room, take heed: what can go wrong quite often will, and at the worst possible time. Engineering needs to help MIS analyze and develop a contingency program.

Our recommendations for developing a data-center ACU contingency plan include:

- Send directly to plant maintenance and security any alarm condition related to the failure of an AHU supplying the server room.

- Handle similarly an alarm of high temperature or high humidity in the server room.

- Communicate these alarm situations as top-priority emergency-response matters.

- Have in place a written and tested procedure for providing emergency backup cooling to the server room using portable air-conditioning equipment from a local supplier. That equipment should be guaranteed to be on site within two or three hours.

- Have at the ready a list of the names and phone numbers of plant personnel (and the local HVAC contractor) who will respond to an emergency anytime day or night.

- Schedule thorough, semi-annual maintenance of ACUs and controls.
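The alarm-handling recommendations in the list above could be encoded in a monitoring system roughly as follows. This is a minimal sketch, not a description of any particular product: the names `AlarmType`, `Dispatch`, and `route_alarm`, the recipient labels, and the routing of all other alarms to corporate MIS are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class AlarmType(Enum):
    AHU_FAILURE = "AHU failure"
    HIGH_TEMPERATURE = "high temperature"
    HIGH_HUMIDITY = "high humidity"
    OTHER = "other"


# Alarms that threaten server-room cooling and need a local, immediate response.
COOLING_ALARMS = {
    AlarmType.AHU_FAILURE,
    AlarmType.HIGH_TEMPERATURE,
    AlarmType.HIGH_HUMIDITY,
}


@dataclass(frozen=True)
class Dispatch:
    recipients: tuple[str, ...]
    priority: str


def route_alarm(alarm: AlarmType) -> Dispatch:
    """Send cooling alarms straight to on-site responders as emergencies."""
    if alarm in COOLING_ALARMS:
        return Dispatch(("plant maintenance", "plant security"), "emergency")
    # Assumed default: everything else follows the normal MIS path.
    return Dispatch(("corporate MIS",), "routine")
```

The point of the design is that a cooling alarm reaches people who can walk to the unit without first passing through a remote help desk.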

System designs that optimize server-room energy efficiency and operational reliability and contribute to corporate sustainability and climate initiatives certainly are noteworthy achievements. We applaud companies that have made information widely available and raised awareness of the importance of data-center operations. A server room with an unreliable cooling system can compromise all of these remarkable new improvements. Backup power is and has for years been a basic MIS strategy; rarely does a system go down because of an electrical issue. Cooling systems need similar design and redundancy.

Summary

One in four of the facilities we visited recently had an operational-reliability risk involving the air-conditioning system supplying the MIS server room.

The equipment supplying cooling to your data center requires the same level of quality, reliability, instrumentation control, and redundancy as the unit supplying the plant-operations manager’s or vice president’s office. Appreciate that these systems generally have a reliable life of 10 years.

If your data center is mature and/or does not get regular thorough servicing by expert air-conditioning technicians, we highly recommend:

- A thorough assessment of HVAC-equipment reliability.

- Sending operational alarms to the maintenance department.

- A written contingency plan for a serious failure.

- Electronic surveillance security protection for remote ACU equipment.

An engineering consultant with JoGar Energy Services, Gary W. Wamsley, PE, CEM, has more than 40 years of experience with industrial boiler and process burner systems. He is a member of HPAC Engineering’s Editorial Advisory Board.
